The pixel, a portmanteau of “picture element,” is the fundamental building block of all digital imagery. Far from being a simple square of color, the modern camera pixel is a complex, microscopic photodetector that sits at the intersection of optics, semiconductor physics, and computational processing. Its evolution has driven the entire digital imaging industry, transforming photography from a chemical process into a data-capture science. This article traces the camera pixel from its historical origins to its internal architecture, the challenges of archiving its data, its role in measuring distance, and its final presentation on modern display technologies.

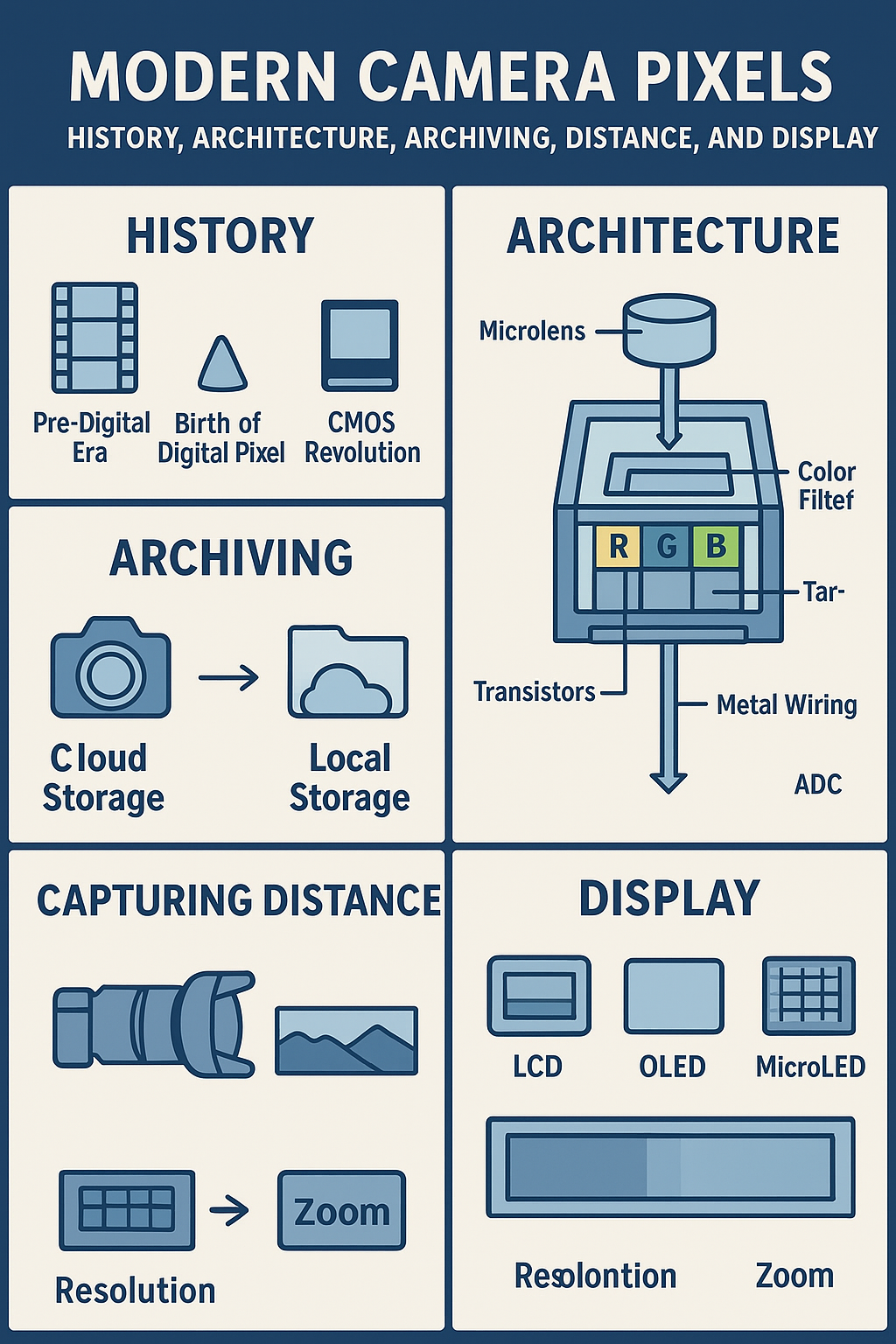

The Genesis of the Pixel: A Historical Overview

The concept of a discrete picture element predates digital photography by decades. The term “pixel” itself was first formally published in 1965 in the proceedings of the Society of Photo-Optical Instrumentation Engineers (SPIE) 1. This marked the beginning of a conceptual shift from continuous analog signals to quantifiable, addressable units of visual information.

The practical realization of the digital image sensor began in the late 1960s and early 1970s. Early sensors, such as those developed for NASA’s space programs, were rudimentary by today’s standards, often featuring resolutions as low as 100×100 pixels, or 10,000 total pixels 2. These early devices laid the groundwork for the two dominant sensor technologies that would emerge: Charge-Coupled Devices (CCD) and Complementary Metal-Oxide-Semiconductor (CMOS) sensors.

While CCDs dominated the early years of digital photography due to their superior image quality, the CMOS sensor eventually prevailed. CMOS technology offered lower power consumption, faster readout speeds, and, crucially, the ability to integrate processing circuitry directly onto the sensor chip. This integration capability was the key to the modern pixel’s complexity and performance.

The Architecture of Modern Pixels

The modern camera pixel is a marvel of miniaturization and engineering, built on the foundation of CMOS technology. At its core, each pixel is built around a photodiode that converts incoming photons (light) into electrical charge. The structures surrounding this photodiode determine the sensor’s performance, particularly in low light and at high speeds.

Back-Side Illumination (BSI)

Traditional CMOS sensors, known as Front-Side Illuminated (FSI) designs, place the metal wiring and transistors in front of the photodiode, between it and the incoming light. This wiring partially obstructs the light, reducing the sensor’s efficiency. Back-Side Illumination (BSI) technology solved this problem by flipping the sensor structure.

In a BSI sensor, the wiring layer is moved behind the photodiode, allowing light to strike the light-sensitive area directly 3. This results in:

•Improved Light Sensitivity: More photons reach the photodiode, significantly enhancing low-light performance.

•Higher Signal-to-Noise Ratio: A stronger signal relative to the noise floor yields cleaner images, especially in dim light.
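To illustrate why more photons reaching the photodiode gives cleaner images, here is a minimal sketch of a pixel under a simple shot-noise plus read-noise model. The photon count, read noise, and both quantum-efficiency values are hypothetical numbers chosen for illustration, not measurements of any real FSI or BSI sensor.

```python
import math

def pixel_snr(photons: float, quantum_efficiency: float, read_noise_e: float) -> float:
    """Signal-to-noise ratio of one pixel (shot noise + read noise model).

    photons: mean number of photons arriving at the pixel during the exposure
    quantum_efficiency: fraction of those photons converted to electrons (0..1)
    read_noise_e: RMS read noise in electrons
    """
    signal_e = photons * quantum_efficiency      # collected photo-electrons
    shot_noise_var = signal_e                    # Poisson statistics: variance equals mean
    total_noise = math.sqrt(shot_noise_var + read_noise_e ** 2)
    return signal_e / total_noise

# Hypothetical dim-light exposure: 200 photons per pixel, 3 e- read noise.
PHOTONS = 200
READ_NOISE = 3.0

# Hypothetical effective QE values: in the FSI case, wiring blocks part of the light.
snr_fsi = pixel_snr(PHOTONS, 0.45, READ_NOISE)
snr_bsi = pixel_snr(PHOTONS, 0.75, READ_NOISE)

print(f"FSI SNR: {snr_fsi:.1f}")
print(f"BSI SNR: {snr_bsi:.1f}")
```

With these assumed numbers the BSI pixel reaches an SNR of about 11.9 versus about 9.0 for the FSI pixel. When shot noise dominates, SNR grows roughly with the square root of the collected signal, so light that is not blocked by wiring translates directly into cleaner images.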

Stacked CMOS Architecture

The latest advancement is the Stacked CMOS sensor, one of the most advanced sensor designs in production today 4. This architecture takes the BSI concept further by separating the pixel array (the photodiodes) from the processing circuitry (logic chip) and stacking them vertically.

| Feature | Front-Side Illumination (FSI) | Back-Side Illumination (BSI) | Stacked BSI CMOS |
|---|---|---|---|
| Light Path | Obstructed by wiring | Direct to photodiode | Direct to photodiode |
| Processing | Integrated on sensor layer | Integrated on sensor layer | Separate, dedicated logic chip |
| Performance | Basic, slower readout | Improved low-light, faster readout | Fastest readout, best focus speed |
| Application | Older, budget sensors | Mid-range to high-end cameras | Flagship sports/video cameras |

The dedicated logic chip in the stacked design enables extremely fast data readout, which is essential for high-speed continuous shooting, 4K/8K video capture, and advanced features like fast autofocus and rolling shutter mitigation.
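Readout speed matters for rolling shutter because a CMOS sensor typically reads its rows one after another, so the bottom of the frame is sampled later than the top. The sketch below estimates that delay and how far a moving subject shifts during it. The row count, per-row readout times, and subject speed are hypothetical values, not specifications of any particular stacked or non-stacked sensor.

```python
def readout_skew_s(rows: int, row_time_us: float) -> float:
    """Approximate time to read every row of a rolling-shutter frame, in seconds."""
    return rows * row_time_us * 1e-6

def subject_shift_px(speed_px_per_s: float, skew_s: float) -> float:
    """Pixels a horizontally moving subject travels while the frame is being read out."""
    return speed_px_per_s * skew_s

ROWS = 4000      # hypothetical sensor height in rows
SPEED = 2000.0   # hypothetical subject speed across the frame, pixels per second

# Hypothetical per-row readout times for a slower and a faster (stacked-style) design.
for label, row_time_us in [("slower readout", 7.5), ("faster readout", 1.0)]:
    skew = readout_skew_s(ROWS, row_time_us)
    shift = subject_shift_px(SPEED, skew)
    print(f"{label}: skew {skew * 1000:.1f} ms, subject shift {shift:.0f} px")
```

Under these assumptions the slower design takes about 30 ms to read the frame and the subject slants by roughly 60 px, while the faster one takes about 4 ms and the slant shrinks to roughly 8 px.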

Pixel Data Archiving and Preservation

The process of archiving digital images is fundamentally about preserving the integrity of the captured pixel data. The choice of file format is critical, as it determines how much of the original sensor data is retained.

RAW vs. JPEG: The Data Difference

The primary distinction in digital archiving lies between RAW and JPEG formats.

•RAW Files: These files contain the sensor’s digitized output with minimal processing and little or no compression. A typical RAW file records 12 to 14 bits per pixel sample 5; on a sensor with a color filter array, each photosite captures only one color, and full color is reconstructed later during processing. This extra bit depth preserves more color and tonal information, making RAW the preferred format for professional editing and long-term preservation.

•JPEG Files: These are processed, compressed files that discard data (lossy compression) to achieve smaller file sizes. JPEGs typically store only 8 bits of data per color channel 5. While convenient for sharing, the data loss makes them unsuitable for archival purposes where maximum image integrity is required.
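The gap between 8-bit and 12- to 14-bit data is easiest to see by counting levels. The sketch below does that and then applies a hypothetical, purely linear 14-to-8-bit reduction; a real RAW converter also demosaics and applies a nonlinear tone curve, so this only illustrates how many distinct input values end up sharing each output code.

```python
def tonal_levels(bits: int) -> int:
    """Number of distinct values a sample of the given bit depth can represent."""
    return 2 ** bits

for bits in (8, 12, 14):
    print(f"{bits}-bit sample: {tonal_levels(bits):,} levels")

def naive_14_to_8(value_14bit: int) -> int:
    """Hypothetical linear reduction: drop the 6 least significant bits (14 - 8 = 6)."""
    return value_14bit >> 6

# Map every possible 14-bit value and count how many distinct 8-bit codes remain.
codes = {naive_14_to_8(v) for v in range(tonal_levels(14))}
print(f"distinct 8-bit codes after reduction: {len(codes)}")
print(f"14-bit values per 8-bit code: {tonal_levels(14) // len(codes)}")
```

Here 16,384 input levels collapse onto 256 codes, 64 inputs per code. Once an image has been saved that way, the discarded gradations cannot be recovered, which is why an 8-bit JPEG is a poor archival master.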

Preservation Formats and Best Practices

For long-term digital preservation, institutions and professionals adhere to strict guidelines to ensure data remains accessible and unaltered for decades. The goal is to use open, non-proprietary, and lossless formats.

| Preservation Level | Recommended Format | Characteristics | Archival Suitability |
|---|---|---|---|
| Primary | TIFF (uncompressed) | Lossless, widely supported, stores all pixel data. | Excellent |
| Secondary | JPEG 2000 (lossless) | Modern, open standard, supports lossless compression. | Excellent |
| Tertiary | PNG | Lossless, good for web, but less common for professional photo archiving. | Good |

The best practice for archiving is to save the original RAW files (if available) and a high-quality, uncompressed TIFF master file derived from the RAW data. This dual-format approach ensures both the original sensor data and a universally accessible, lossless image are preserved.
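Keeping both a RAW file and an uncompressed TIFF master has a storage cost. The sketch below estimates the pixel payload for a hypothetical 6000 × 4000 (24-megapixel) sensor. It assumes a 16-bit-per-channel RGB TIFF master and a tightly packed, uncompressed 14-bit RAW mosaic, and it ignores headers, metadata, and embedded previews. Real RAW formats are often packed or compressed differently.

```python
def uncompressed_rgb_bytes(width: int, height: int, bits_per_channel: int, channels: int = 3) -> int:
    """Pixel payload of an uncompressed RGB image, excluding headers and metadata."""
    return width * height * channels * bits_per_channel // 8

def raw_mosaic_bytes(width: int, height: int, bits_per_sample: int) -> int:
    """Payload of an uncompressed, tightly packed RAW mosaic (one sample per photosite)."""
    return width * height * bits_per_sample // 8

W, H = 6000, 4000  # hypothetical 24-megapixel sensor

print(f"16-bit RGB TIFF master: {uncompressed_rgb_bytes(W, H, 16) / 1e6:.0f} MB")
print(f"8-bit RGB image:        {uncompressed_rgb_bytes(W, H, 8) / 1e6:.0f} MB")
print(f"14-bit RAW mosaic:      {raw_mosaic_bytes(W, H, 14) / 1e6:.0f} MB")
```

Under these assumptions the TIFF master is about 144 MB, compared with about 42 MB for the RAW mosaic. The TIFF is larger because it stores three full-precision channels per pixel, whereas the RAW file stores one sample per photosite.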

The Pixel and Distance Measurement

While a single pixel records light intensity, the collective data from an array of pixels can be used to infer or directly measure the distance of objects in a scene. This is a crucial aspect of modern computational photography and machine vision.

Depth of Field and Pixel Size

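Depth of field (DOF) is governed by aperture, focal length, subject distance, and the acceptable circle of confusion, which scales with sensor size. The physical size of the pixel (often referred to as pixel pitch) is therefore linked to DOF only indirectly 6. Smaller pixels are common in smaller sensors, and because a smaller sensor needs a shorter focal length to frame the same scene, it generally yields a greater DOF at a given f-number, meaning more of the scene appears in focus. Conversely, larger sensors such as full-frame, which usually carry larger pixels, produce a shallower DOF under the same conditions. They demand more precise focusing but offer superior light-gathering capabilities.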
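The sensor-size effect can be checked with the standard thin-lens depth-of-field approximations based on the hyperfocal distance. The sketch below compares a full-frame camera at 50 mm with a Micro Four Thirds camera at 25 mm, which gives similar framing, both at f/2.8 and focused at 3 m. The circle-of-confusion values (0.030 mm and 0.015 mm) are common conventions rather than fixed standards, and they are assumptions here.

```python
import math

def dof_limits_mm(focal_mm: float, f_number: float, coc_mm: float, subject_mm: float):
    """Near and far limits of acceptable sharpness (thin-lens approximation).

    Returns (near, far) in millimetres; far is math.inf at or beyond the hyperfocal distance.
    """
    hyperfocal = focal_mm ** 2 / (f_number * coc_mm) + focal_mm
    near = subject_mm * (hyperfocal - focal_mm) / (hyperfocal + subject_mm - 2 * focal_mm)
    if subject_mm >= hyperfocal:
        far = math.inf
    else:
        far = subject_mm * (hyperfocal - focal_mm) / (hyperfocal - subject_mm)
    return near, far

SUBJECT_MM = 3000.0  # focus distance: 3 m
F_NUMBER = 2.8

# (focal length in mm, assumed circle of confusion in mm) for similar framing
cameras = {
    "full frame, 50 mm": (50.0, 0.030),
    "Micro Four Thirds, 25 mm": (25.0, 0.015),
}

for name, (focal, coc) in cameras.items():
    near, far = dof_limits_mm(focal, F_NUMBER, coc, SUBJECT_MM)
    print(f"{name}: {near / 1000:.2f} m to {far / 1000:.2f} m (DOF {(far - near) / 1000:.2f} m)")
```

With these inputs the full-frame setup keeps roughly 2.73 m to 3.33 m sharp (about 0.60 m of DOF). The smaller sensor covers roughly 2.50 m to 3.75 m (about 1.25 m), so the DOF is about twice as deep for the same framing and f-number.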

Range Imaging and Depth Sensing

Modern cameras and sensors are increasingly using range imaging techniques to assign a distance value to every pixel, creating a depth map. Technologies used for this include:

•Time-of-Flight (ToF): Measures the time it takes for a pulse of light (often infrared) to travel from the sensor to the object and back. Because the light covers the distance twice, each pixel’s distance is half the round-trip time multiplied by the speed of light.

•Structured Light: Projects a known pattern onto the scene and analyzes the distortion of the pattern to calculate depth.

•Stereo Vision: Uses two cameras separated by a known distance to triangulate the distance to objects, similar to human binocular vision.
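Two of these techniques reduce to short formulas. Direct ToF converts a round-trip time into distance, and rectified stereo triangulates depth as Z = f·B/d, where f is the focal length in pixels, B the baseline between the cameras, and d the disparity in pixels. The sketch below is a minimal illustration. The stereo rig parameters (1000 px focal length, 10 cm baseline) are hypothetical, and structured light is not covered.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0  # exact, by definition of the metre

def tof_distance_m(round_trip_s: float) -> float:
    """Direct time-of-flight: light travels out and back, so the distance is half the path."""
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2

def stereo_depth_m(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Rectified stereo triangulation: Z = f * B / d.

    focal_px: focal length expressed in pixels
    baseline_m: distance between the two camera centres, in metres
    disparity_px: horizontal shift of the same point between the two images, in pixels
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point in front of both cameras")
    return focal_px * baseline_m / disparity_px

print(f"ToF, 20 ns round trip: {tof_distance_m(20e-9):.3f} m")
print(f"ToF, distance per 1 ns: {tof_distance_m(1e-9) * 100:.1f} cm")

# Hypothetical stereo rig: 1000 px focal length, 10 cm baseline.
for d in (50, 25, 10):
    print(f"disparity {d:>2} px -> depth {stereo_depth_m(1000, 0.10, d):.1f} m")
```

The output shows why ToF needs very precise timing: each nanosecond corresponds to about 15 cm. It also shows that stereo depth resolution falls off with distance, because far objects produce small disparities and a one-pixel change there means a large jump in depth.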

In these systems, the pixel is no longer just a 2D coordinate (x, y) but a 3D data point (x, y, z), where ‘z’ is the measured distance from the camera 7.
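Turning a depth map into (x, y, z) points is usually done with a pinhole camera model. The sketch below assumes the depth map already stores depth along the optical axis. Some sensors report distance along each pixel’s ray instead, which would need converting first. The intrinsics (focal lengths fx, fy and principal point cx, cy, all in pixels) and the tiny depth map are hypothetical.

```python
def back_project(u: float, v: float, z: float, fx: float, fy: float, cx: float, cy: float):
    """Map pixel (u, v) with depth z to camera-frame coordinates (X, Y, Z), pinhole model."""
    x = (u - cx) * z / fx
    y = (v - cy) * z / fy
    return x, y, z

def depth_map_to_points(depth, fx, fy, cx, cy):
    """Convert a depth map (list of rows, metres; 0 means no measurement) into 3D points."""
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z > 0:
                points.append(back_project(u, v, z, fx, fy, cx, cy))
    return points

# Hypothetical 2 x 2 depth map and intrinsics.
depth = [[0.0, 2.0],
         [1.0, 1.0]]
for point in depth_map_to_points(depth, fx=500.0, fy=500.0, cx=0.5, cy=0.5):
    print(tuple(round(c, 4) for c in point))
```

Pixels without a valid measurement (depth 0) are skipped, so the result is a sparse point cloud rather than a full grid.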

From Sensor to Screen: Pixel Display Technology

The final stage in the life of a captured pixel is its presentation on a display. This process involves a complex mapping from the camera’s sensor data to the display’s physical pixels, often with significant differences in resolution and color space.

Pixel Mapping and Resolution Mismatch

When an image is displayed, the captured resolution rarely matches the display’s native resolution. This requires a process called resampling or scaling, where the image processor interpolates or averages the captured pixel data to fit the display’s pixel grid. If a high-resolution image is displayed on a low-resolution screen, the display must discard or average data, potentially losing fine detail. Conversely, displaying a low-resolution image on a high-resolution screen requires interpolation, which can introduce artifacts or a “softer” appearance 8.
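The two directions of resampling can be sketched with two simple filters: box averaging for shrinking and bilinear interpolation for enlarging. These are chosen here for clarity. Real scalers in displays, GPUs, and image editors often use other filters, such as bicubic or Lanczos. The example works on a hypothetical single-channel image stored as a list of rows.

```python
def downscale_box(img, k):
    """Shrink a grayscale image by an integer factor k by averaging each k x k block.
    Rows/columns that do not fill a whole block are dropped."""
    h, w = len(img) // k, len(img[0]) // k
    out = []
    for by in range(h):
        row = []
        for bx in range(w):
            block = [img[by * k + dy][bx * k + dx] for dy in range(k) for dx in range(k)]
            row.append(sum(block) / (k * k))
        out.append(row)
    return out

def upscale_bilinear(img, new_h, new_w):
    """Enlarge a grayscale image by bilinear interpolation (corner pixels aligned)."""
    h, w = len(img), len(img[0])
    out = []
    for y in range(new_h):
        sy = y * (h - 1) / (new_h - 1) if new_h > 1 else 0.0
        y0 = int(sy)
        y1 = min(y0 + 1, h - 1)
        wy = sy - y0                      # vertical interpolation weight
        row = []
        for x in range(new_w):
            sx = x * (w - 1) / (new_w - 1) if new_w > 1 else 0.0
            x0 = int(sx)
            x1 = min(x0 + 1, w - 1)
            wx = sx - x0                  # horizontal interpolation weight
            top = img[y0][x0] * (1 - wx) + img[y0][x1] * wx
            bottom = img[y1][x0] * (1 - wx) + img[y1][x1] * wx
            row.append(top * (1 - wy) + bottom * wy)
        out.append(row)
    return out

# A hard vertical edge: two dark columns next to two bright columns.
edge = [[0, 0, 255, 255] for _ in range(4)]
small = downscale_box(edge, 2)            # averaged down to 2 x 2
restored = upscale_bilinear(small, 4, 4)  # interpolated back up to 4 x 4
print(small[0])
print([round(v) for v in restored[0]])
```

Shrinking keeps the edge only because it happens to fall on a block boundary. Enlarging the result again turns the hard 0-to-255 step into a ramp of intermediate values (0, 85, 170, 255). This is the “softer” appearance that interpolation introduces.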

Modern Display Technologies

The quality of the final image is heavily dependent on the display technology, which determines how accurately the captured pixel data is rendered.

| Display Technology | Core Mechanism | Key Advantage |
|---|---|---|
| OLED (Organic LED) | Each pixel is a self-illuminating diode. | Perfect blacks, infinite contrast, fast response time. |
| MicroLED | Microscopic inorganic LEDs for each pixel. | High brightness, long lifespan, no burn-in risk. |
| QD-OLED (Quantum Dot OLED) | Blue OLED panel with Quantum Dots for red/green conversion. | Combines OLED contrast with Quantum Dot color purity. |

These modern displays, with their deep blacks and wide color gamuts, can reveal more of the dynamic range and color depth captured by advanced BSI and Stacked CMOS sensors.

Camera-Side Pixel Correction

It is also worth noting the camera-side function known as Pixel Mapping 9. This maintenance function lets the camera identify and digitally correct “dead” or “hot” pixels: defective sensor elements that consistently read dark or bright regardless of the light that reaches them. The camera records the positions of these defective pixels and replaces their values with data interpolated from surrounding, functional pixels, preserving the integrity of the final image file.
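A defect map and its correction can be sketched in a few lines. This sketch makes two assumptions that are not taken from any camera maker’s actual method. First, hot pixels are found as outliers in a dark frame (an exposure with no light) using a hypothetical fixed threshold. Second, each mapped pixel is replaced with the median of its valid neighbors. The median is a robust choice of interpolation, so a second bad neighbor does not skew the result. The sketch also works on single-channel data. A real sensor with a color filter array would draw only on same-color neighbors in the mosaic.

```python
from statistics import median

def find_hot_pixels(dark_frame, threshold):
    """Build a defect map: pixels that read brighter than `threshold` in a dark frame."""
    return {(r, c)
            for r, row in enumerate(dark_frame)
            for c, value in enumerate(row)
            if value > threshold}

def correct_defects(img, defects):
    """Replace each mapped pixel with the median of its valid 8-connected neighbours.

    img: single-channel image as a list of rows (a corrected copy is returned)
    defects: set of (row, col) positions from the defect map
    """
    h, w = len(img), len(img[0])
    out = [list(row) for row in img]
    for r, c in defects:
        neighbours = [
            img[rr][cc]
            for rr in range(r - 1, r + 2)
            for cc in range(c - 1, c + 2)
            if (rr, cc) != (r, c)
            and 0 <= rr < h and 0 <= cc < w
            and (rr, cc) not in defects      # never borrow data from another defect
        ]
        if neighbours:
            out[r][c] = median(neighbours)
    return out

# Hypothetical 12-bit data: the centre photosite is hot.
dark = [[2, 3, 2],
        [3, 900, 2],
        [2, 2, 3]]
image = [[100, 102, 101],
         [99, 4095, 103],
         [100, 98, 101]]

defect_map = find_hot_pixels(dark, threshold=50)
fixed = correct_defects(image, defect_map)
print(defect_map, fixed[1][1])
```

The stuck value of 4095 is replaced by 100.5, the median of its eight neighbors. The defect map is built once and then reused for every exposure, which matches the idea of mapping defects in advance.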

Conclusion

The camera pixel is a dynamic and evolving technology that underpins all of digital imaging. From the first published use of the term in 1965 to the intricate, multi-layered semiconductor architecture of today, the pixel has consistently pushed the boundaries of what is possible in image capture. Its journey, from photon conversion and high-speed processing to lossless archiving, 3D depth sensing, and final presentation on advanced displays, is a testament to the continuing convergence of physics, computer science, and art. As sensor technology advances with ever more complex stacking and computational techniques, the pixel will remain the most critical component in our quest for visual fidelity.
