Introduction

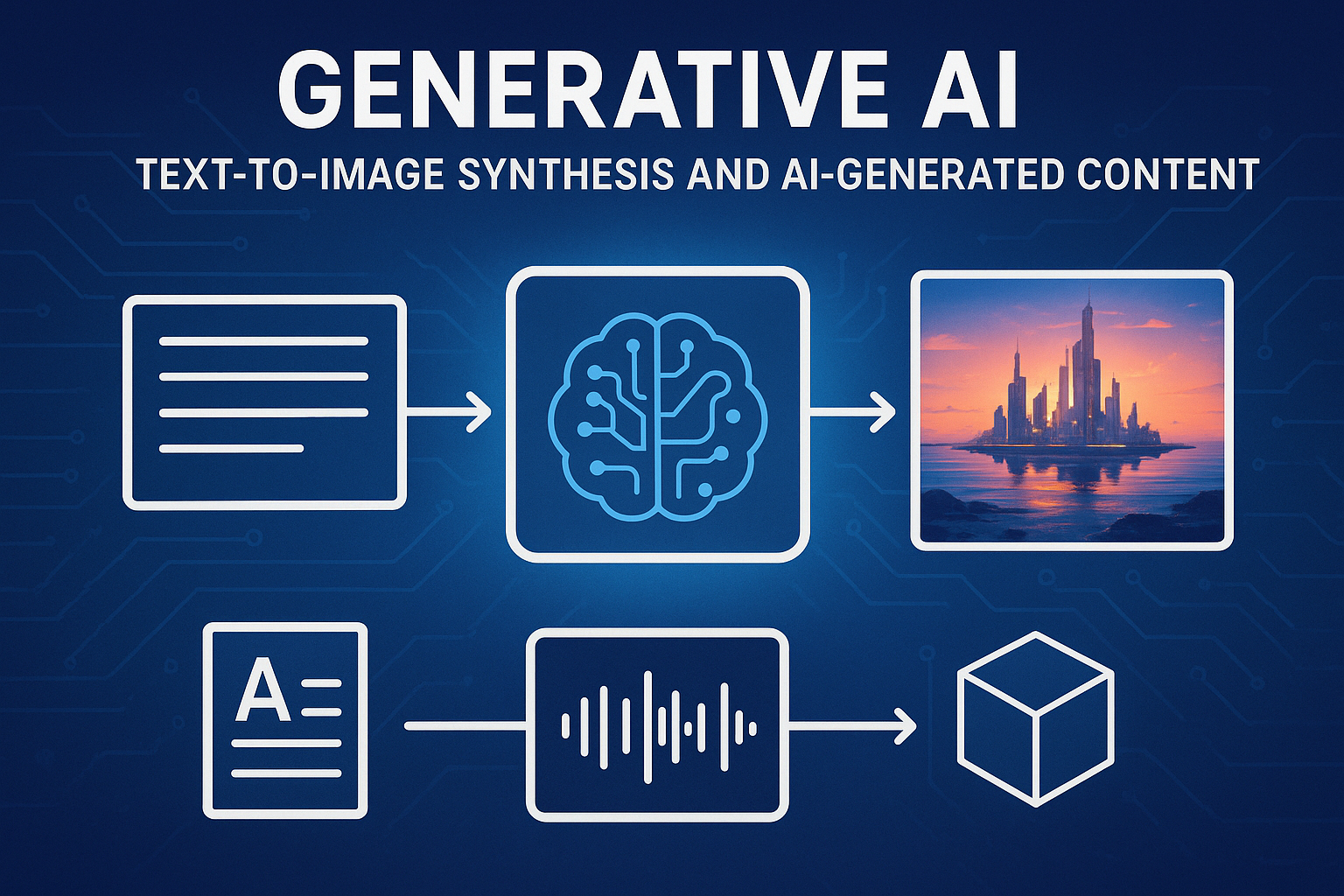

Generative artificial intelligence represents one of the most transformative technological developments of the 21st century. Unlike traditional AI systems that classify, recognize, or analyze existing data, generative AI creates entirely new content—from photorealistic images and coherent text to music, video, and code. At the heart of this revolution lies the ability to translate human language into visual reality through text-to-image synthesis, fundamentally changing how we create, communicate, and conceptualize creative work.

The journey from rule-based graphics to AI systems that can conjure detailed images from simple text prompts reflects decades of research in machine learning, computer vision, and natural language processing. Today, millions of people use generative AI tools daily, democratizing creative capabilities that once required years of technical training. This article explores the technology behind text-to-image synthesis, examines the broader landscape of AI-generated content, and considers the profound implications for creativity, industry, and society.

The Foundation: Understanding Generative Models

What Makes AI Generative?

Generative AI systems learn patterns from vast datasets and use this knowledge to create new outputs that resemble, but generally do not duplicate, their training data. The key distinction lies in their ability to generate rather than merely recognize or classify. While a traditional computer vision system might identify a cat in a photograph, a generative system can create an entirely new image of a cat that never existed.

This capability emerges from sophisticated mathematical models that learn the underlying probability distributions of their training data. By understanding what makes an image look like a cat, a landscape, or an abstract painting, these models can sample from learned distributions to produce novel examples.
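As a deliberately tiny illustration of “learn a distribution, then sample from it”, the sketch below fits a one-dimensional Gaussian to a handful of numbers and draws new values from it. The data and the names `fit_gaussian` and `generate` are made up for this example; real image models learn far more complex, high-dimensional distributions.

```python
import random
import statistics


def fit_gaussian(samples):
    """Estimate the mean and standard deviation of 1-D training data."""
    return statistics.fmean(samples), statistics.stdev(samples)


def generate(mean, std, n, seed=0):
    """Draw n new values from the fitted distribution."""
    rng = random.Random(seed)
    return [rng.gauss(mean, std) for _ in range(n)]


# Hypothetical "training data": average brightness of a few images.
training = [0.42, 0.47, 0.51, 0.55, 0.49, 0.53, 0.45, 0.50]
mean, std = fit_gaussian(training)

# New values that resemble the training data without copying it.
new_samples = generate(mean, std, n=5)
```

The generated values cluster around the training mean but are not copies of any training point, which is the same idea a generative image model applies at a vastly larger scale.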

Neural Networks and Deep Learning

The generative AI revolution builds on neural networks—computing systems loosely inspired by biological brains. Deep learning, which uses neural networks with many layers, proved particularly effective at capturing complex patterns in data. Each layer extracts increasingly abstract features: early layers might detect edges and textures, while deeper layers recognize objects, scenes, and semantic relationships.

The breakthrough came when researchers developed architectures specifically designed for generation rather than classification. These networks don’t just learn to recognize patterns—they learn to create them.

Text-to-Image Synthesis: From Words to Pixels

The Core Challenge

Converting text descriptions into images presents a uniquely difficult problem. Language operates at a high level of abstraction, while images exist as grids of pixels with precise color values. A simple prompt like “a sunset over mountains” leaves countless details unspecified: the exact mountain shapes, cloud formations, color gradients, lighting conditions, and atmospheric effects. The AI must not only understand the semantic meaning of the text but also make countless creative decisions to produce a coherent, visually appealing result.

Furthermore, text-to-image systems must handle ambiguity, understand spatial relationships, apply consistent lighting and physics, and maintain artistic coherence—all while processing the nuanced, often imprecise nature of human language.

Generative Adversarial Networks (GANs)

GANs, introduced in 2014, represented an early breakthrough in image generation. This architecture pits two neural networks against each other in a creative competition. The generator network creates images, while the discriminator network evaluates whether images are real (from the training dataset) or generated. Through this adversarial process, the generator learns to create increasingly convincing images that can fool the discriminator.
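The adversarial objective can be sketched with the standard binary cross-entropy losses. This is a minimal illustration, assuming the discriminator outputs a probability between 0 and 1 that an image is real. The function names are hypothetical, and the generator loss shown is the commonly used non-saturating variant rather than the objective of any specific system.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Low when D scores real images near 1 and generated images near 0."""
    return -(math.log(d_real) + math.log(1.0 - d_fake))


def generator_loss(d_fake):
    """Low when D is fooled, i.e. scores generated images near 1."""
    return -math.log(d_fake)


# A discriminator that separates real from fake well has a low loss...
confident_d = discriminator_loss(d_real=0.9, d_fake=0.1)
# ...while one that cannot tell them apart has a higher loss.
confused_d = discriminator_loss(d_real=0.5, d_fake=0.5)
```

In training, the two networks are updated in alternation: one step lowers the discriminator loss, the next lowers the generator loss, and each update makes the other network's task harder.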

Early GANs could generate faces, objects, and simple scenes, but struggled with text conditioning and high-resolution outputs. They also suffered from training instability and mode collapse, where the generator would produce limited varieties of output. Despite these limitations, GANs demonstrated that neural networks could create photorealistic images and inspired subsequent innovations.

Variational Autoencoders (VAEs)

VAEs approach generation differently by learning to compress images into a compact latent representation and then reconstruct them. This latent space becomes a continuous mathematical space where similar concepts cluster together. By learning this structured representation, VAEs can generate new images by sampling from regions of latent space.

VAEs provide stable training and smooth interpolation between concepts but traditionally produced blurrier outputs than GANs. However, they became crucial components in later text-to-image architectures because of their ability to create meaningful, manipulable latent spaces.
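Two operations on the latent space can be sketched in a few lines: sampling a latent vector from the encoder's predicted mean and variance, and interpolating between two latent vectors. This is only an illustration with hypothetical function names; the encoder and decoder networks themselves are omitted, and latent vectors are plain Python lists.

```python
import math
import random


def sample_latent(mu, log_var, rng):
    """Reparameterized sample: z = mu + sigma * eps, with eps ~ N(0, 1)."""
    return [
        m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0)
        for m, lv in zip(mu, log_var)
    ]


def interpolate(z_a, z_b, t):
    """Linear blend between two latent vectors; t=0 gives z_a, t=1 gives z_b."""
    return [(1.0 - t) * a + t * b for a, b in zip(z_a, z_b)]


rng = random.Random(0)
z = sample_latent(mu=[0.0, 1.0], log_var=[0.0, 0.0], rng=rng)
halfway = interpolate([0.0, 0.0], [2.0, 4.0], t=0.5)
```

Decoding a sequence of interpolated vectors is what produces the smooth transitions between concepts described above.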

The Transformer Revolution

The attention mechanism and transformer architecture, originally developed for natural language processing, revolutionized generative AI. Transformers excel at capturing long-range dependencies and relationships in data, whether that data is text, images, or other modalities.

By processing inputs as sequences of tokens and using self-attention to weigh the importance of different parts of the input, transformers can understand context and relationships in sophisticated ways. This capability proved essential for bridging the gap between language and images.
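The core of self-attention fits in a short function. The sketch below is a simplification: real transformers first apply learned projection matrices to obtain separate queries, keys, and values and use multiple attention heads, whereas here each token vector serves directly as its own query, key, and value.

```python
import math


def softmax(scores):
    """Turn raw scores into weights that are positive and sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(tokens):
    """Scaled dot-product self-attention with Q = K = V = tokens."""
    dim = len(tokens[0])
    outputs = []
    for query in tokens:
        # Similarity of this token to every token in the sequence.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in tokens
        ]
        weights = softmax(scores)
        # Each output is a weighted average of all token vectors.
        outputs.append([
            sum(w * value[j] for w, value in zip(weights, tokens))
            for j in range(dim)
        ])
    return outputs


mixed = self_attention([[1.0, 0.0], [0.0, 1.0]])
```

Each output vector blends information from the whole sequence, weighted by relevance, which is how a token can take context from distant parts of the input.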

Diffusion Models: The Current State-of-the-Art

Diffusion models represent the dominant approach in modern text-to-image systems. These models work through a denoising process: they learn to gradually remove noise from random static until a coherent image emerges, guided by a text prompt.

Training is built around two processes. In the forward process, noise is added to clean images over many steps according to a fixed schedule; nothing is learned at this stage. The model is then trained to reverse this corruption, typically by predicting the noise that was added at a given step. During generation, the model starts from pure noise and removes it iteratively, with the text prompt steering each denoising step toward images that match the description.
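The noising side of this process has a simple closed form in the widely used DDPM formulation: a noisy sample at step t is a weighted mix of the clean image and Gaussian noise. The sketch below assumes a linear noise schedule with illustrative default values and treats an image as a flat list of numbers; the denoising network and text conditioning are omitted.

```python
import math
import random


def alpha_bar(t, num_steps=1000, beta_start=1e-4, beta_end=0.02):
    """Fraction of the original signal remaining after t noising steps."""
    remaining = 1.0
    for s in range(t):
        beta = beta_start + (beta_end - beta_start) * s / (num_steps - 1)
        remaining *= 1.0 - beta
    return remaining


def add_noise(x0, t, rng):
    """Jump directly to step t: x_t = sqrt(a) * x0 + sqrt(1 - a) * eps."""
    a = alpha_bar(t)
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a) * x + math.sqrt(1.0 - a) * e for x, e in zip(x0, eps)]
    # eps is what a noise-prediction model would be trained to recover.
    return x_t, eps


rng = random.Random(0)
clean = [0.5, -0.5, 0.25]
slightly_noisy, _ = add_noise(clean, t=10, rng=rng)
almost_pure_noise, _ = add_noise(clean, t=1000, rng=rng)
```

During training, a network sees the noisy sample and the step index and is penalized for the difference between its predicted noise and `eps`. Generation then runs the learned denoiser step by step, starting from random noise.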

This approach offers several advantages: high-quality outputs, stable training, fine-grained control during generation, and the ability to scale to very large models. Diffusion models underpin leading text-to-image systems, including Stable Diffusion, DALL-E 2 and 3, and, by most accounts, Midjourney, whose architecture has not been published in detail.

Latent Diffusion Models

A crucial optimization came with latent diffusion models, which perform the diffusion process in a compressed latent space rather than pixel space. This dramatically reduces computational requirements while maintaining output quality. By using a VAE to compress images into latent representations, performing diffusion in this compressed space, and then decoding back to pixels, these models achieve impressive results with manageable computational costs.
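A quick back-of-the-envelope calculation shows the scale of the saving. The numbers below assume a 512×512 RGB image and a VAE that downsamples each side by a factor of 8 into 4 latent channels, which matches the commonly cited Stable Diffusion v1 configuration; other models use different factors.

```python
def tensor_size(height, width, channels):
    """Number of values the diffusion process has to handle per image."""
    return height * width * channels


DOWNSAMPLE = 8        # assumed VAE downsampling factor per side
LATENT_CHANNELS = 4   # assumed number of latent channels

pixel_values = tensor_size(512, 512, 3)
latent_values = tensor_size(512 // DOWNSAMPLE, 512 // DOWNSAMPLE, LATENT_CHANNELS)
reduction = pixel_values / latent_values  # 786432 / 16384 = 48.0
```

Under these assumptions, every denoising step operates on roughly 48 times fewer values than it would in pixel space.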

This efficiency made text-to-image generation accessible beyond large research labs, enabling open-source implementations and consumer-grade applications.

Major Text-to-Image Platforms

DALL-E Series

OpenAI’s DALL-E, named playfully after surrealist artist Salvador Dalí and the Pixar robot WALL-E, pioneered widely accessible text-to-image generation. DALL-E 2, released in 2022, demonstrated remarkable capabilities in understanding complex prompts, manipulating concepts, and maintaining consistent style and composition.

The system excels at combining disparate concepts, understanding spatial relationships and attributes, and applying artistic styles. DALL-E 3, integrated with ChatGPT, further improved prompt understanding and image quality while reducing common artifacts and errors.

Midjourney

Midjourney distinguished itself through exceptional artistic quality and aesthetic sensibility. Operating through Discord, it cultivated a community-driven approach where users share, iterate, and inspire each other. The platform particularly excels at artistic and stylized imagery, painterly and illustrative aesthetics, and atmospheric and cinematic compositions.

Midjourney’s evolution through multiple versions demonstrated rapid improvement in coherence, detail, and prompt adherence, making it popular among artists, designers, and creative professionals.

Stable Diffusion

Stable Diffusion, developed by Stability AI in collaboration with academic researchers from the CompVis group at LMU Munich and with Runway, took a different approach by openly releasing its model weights and code. This democratization allowed developers to create custom applications, researchers to study and improve the technology, and users to run models locally without cloud dependencies.

The open ecosystem sparked innovation in custom fine-tuning, specialized models for particular domains, integration into existing creative software, and novel applications and interfaces. Variants like Stable Diffusion XL pushed quality and resolution even higher.

Other Notable Platforms

Adobe Firefly integrated generative AI directly into creative workflows with a focus on commercially safe training data. Google’s Imagen demonstrated strong photorealism and prompt understanding in research contexts. Various specialized tools emerged for architecture, fashion, product design, and other domains.

Beyond Images: The Expanding Landscape of AI-Generated Content

Text Generation

Large language models represent perhaps the most widely adopted form of generative AI. Systems like GPT-4, Claude, and others can generate coherent, contextually appropriate text across numerous applications: content creation and copywriting, code generation and debugging, translation and summarization, conversational AI and assistance, and creative writing and brainstorming.

These models learn from vast text corpora, capturing patterns in language structure, knowledge relationships, reasoning approaches, and stylistic variations. The scale of these models—often containing hundreds of billions of parameters—enables sophisticated understanding and generation.

Video Generation

Text-to-video represents the natural extension of image synthesis, adding the temporal dimension. Recent systems like Sora from OpenAI, Runway’s Gen-2, and Pika demonstrate the ability to generate coherent video clips from text descriptions, maintaining consistency across frames, simulating realistic physics and motion, and handling complex scene dynamics.

Challenges include maintaining temporal coherence, generating longer sequences, ensuring consistent object and character appearance, and managing computational requirements. Despite these hurdles, video generation is advancing rapidly toward practical applications in content creation, prototyping, and education.

Audio and Music Generation

Generative AI extends to audio domains with impressive results. Music generation systems create original compositions in specified styles or moods. Text-to-speech synthesis produces natural-sounding voices from written text. Voice cloning replicates specific voices from audio samples. Sound effect generation creates audio to match visual content or descriptions. Audio editing tools remove noise, enhance quality, and manipulate content.

These capabilities find applications in entertainment, accessibility, content localization, and creative production.

3D and Spatial Content

Generating three-dimensional content presents additional complexity but opens new possibilities. Current systems can create 3D models from text descriptions or images, generate virtual environments and scenes, design architectural concepts and interiors, and create assets for games and simulations.

Text-to-3D typically works by generating multiple 2D views and reconstructing 3D geometry or directly optimizing 3D representations guided by text. Applications span gaming, virtual reality, product design, and architectural visualization.

Code Generation

AI code generation assists programmers by translating natural language descriptions into functioning code, completing code based on context, debugging and explaining existing code, and suggesting improvements and optimizations.

GitHub Copilot, originally built on OpenAI’s Codex model, demonstrated the viability of AI programming assistance. These tools accelerate development, lower barriers to programming, and enable more natural human-computer interaction.

Technical Deep Dive: How Text-to-Image Systems Work

Training Process

Creating a text-to-image model involves several stages. Data collection assembles millions or billions of image-text pairs from the internet and other sources. Preprocessing cleans, filters, and standardizes images while parsing and encoding text descriptions. Model architecture design determines network structure, parameter count, and training objectives.

The training phase teaches the model relationships between text and images, visual composition and aesthetics, and world knowledge and common sense. Training large models requires massive computational resources, often hundreds or thousands of GPUs running for weeks or months.

The Role of CLIP

OpenAI’s CLIP (Contrastive Language-Image Pre-training) proved crucial for modern text-to-image systems. CLIP learns to connect images and text by training on image-text pairs, learning shared embedding spaces where similar concepts cluster together, and enabling semantic image search and similarity measurement.

Many text-to-image systems use CLIP or similar models to encode text prompts into representations that guide the generation process. CLIP’s multimodal understanding bridges the language-vision gap effectively.
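The idea of a shared embedding space can be illustrated with cosine similarity: matching image and text embeddings point in similar directions. The three-dimensional vectors below are invented for illustration only; real CLIP embeddings have hundreds of dimensions and come from trained image and text encoders, which are not shown.

```python
import math


def cosine_similarity(a, b):
    """Similarity of two vectors by direction, ignoring their length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def best_caption(image_embedding, caption_embeddings):
    """Return the caption whose embedding is closest to the image embedding."""
    return max(
        caption_embeddings,
        key=lambda text: cosine_similarity(image_embedding, caption_embeddings[text]),
    )


# Hypothetical embeddings; a real system would compute these with encoders.
captions = {
    "a photo of a cat": [0.9, 0.1, 0.0],
    "a photo of a dog": [0.1, 0.9, 0.0],
    "a city skyline": [0.0, 0.1, 0.9],
}
image = [0.8, 0.2, 0.1]
match = best_caption(image, captions)
```

Contrastive training pushes matching image-text pairs toward high similarity and mismatched pairs toward low similarity, which is what makes this kind of lookup meaningful.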

Prompt Engineering

The quality and specificity of text prompts dramatically affect outputs. Effective prompt engineering includes clear subject description, style and medium specification, lighting and atmosphere details, composition and framing guidance, and quality and detail modifiers.

Advanced techniques include weighting terms to emphasize importance, negative prompts to specify what to avoid, prompt chaining for iterative refinement, and style references for consistency. The art and science of prompt engineering has emerged as a valuable skill.
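In many diffusion-based tools, prompts and negative prompts influence the result through classifier-free guidance: the model predicts noise once for the prompt and once for an empty or negative prompt, and the two predictions are combined. The sketch below shows only that combination step, with noise predictions as plain lists and a hypothetical function name; the default scale of 7.5 is a commonly used value, not a requirement.

```python
def guided_noise(eps_prompt, eps_negative, scale=7.5):
    """Move the prediction away from the negative prompt and toward the prompt."""
    return [
        neg + scale * (pos - neg)
        for pos, neg in zip(eps_prompt, eps_negative)
    ]


# With scale=1.0 the negative prediction cancels out and the prompt
# prediction is returned unchanged.
unchanged = guided_noise([1.0, -1.0], [0.0, 0.0], scale=1.0)

# A larger scale exaggerates the difference between the two predictions.
amplified = guided_noise([1.0, -1.0], [0.0, 0.0], scale=2.0)
```

Higher scales tend to follow the prompt more literally at some cost to variety, which is one reason the guidance scale is often exposed as a user setting.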

ControlNets and Conditioning

Beyond text, modern systems accept additional conditioning inputs for finer control. ControlNet and similar techniques let edge maps guide composition, depth maps define spatial structure, pose references set character positioning, and segmentation maps control layout.
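To make the notion of an edge map concrete, the sketch below marks pixels where brightness changes sharply between neighbours. It is a simplified stand-in for a proper edge detector such as Canny, written for a grayscale image stored as nested lists; the function name and threshold are illustrative, and the conditioning network that consumes the map is not shown.

```python
def edge_map(image, threshold=0.5):
    """Return a binary map with 1 where intensity jumps to the right or below."""
    height, width = len(image), len(image[0])
    edges = [[0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            # Differences to the right and lower neighbours (clamped at borders).
            dx = image[y][min(x + 1, width - 1)] - image[y][x]
            dy = image[min(y + 1, height - 1)][x] - image[y][x]
            if abs(dx) > threshold or abs(dy) > threshold:
                edges[y][x] = 1
    return edges


# A dark left half and a bright right half produce one vertical edge.
image = [
    [0.0, 0.0, 1.0, 1.0],
    [0.0, 0.0, 1.0, 1.0],
]
edges = edge_map(image)
```

A map like this captures where shapes begin and end without fixing colors or textures, leaving those to the text prompt.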

This multi-modal conditioning enables precise creative control while maintaining the flexibility of text-to-image generation.

Inpainting and Outpainting

Inpainting modifies specific regions of an image while preserving the rest, enabling object removal or replacement, fixing errors or artifacts, and style-consistent editing. Outpainting extends images beyond their borders, maintaining consistency and coherence while adding new content.
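The masking idea behind inpainting can be shown with a simple composite: newly generated pixels are used inside the mask and original pixels everywhere else. This is only a sketch with a hypothetical function name. Some pipelines apply a similar blend in latent space at each denoising step, and the model that produces the generated content is omitted here.

```python
def composite(original, generated, mask):
    """Keep original pixels where mask is 0, generated pixels where mask is 1."""
    return [
        [m * g + (1 - m) * o for o, g, m in zip(row_o, row_g, row_m)]
        for row_o, row_g, row_m in zip(original, generated, mask)
    ]


original = [[1, 1], [1, 1]]
generated = [[9, 9], [9, 9]]
mask = [[0, 1], [0, 0]]  # only the top-right pixel is regenerated

result = composite(original, generated, mask)
```

Outpainting follows the same logic with the mask covering the newly added border region instead of an interior area.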

These capabilities transform text-to-image systems from one-shot generators into iterative creative tools.

Applications and Use Cases

Creative Industries

Generative AI impacts creative fields significantly. Concept artists use AI for rapid ideation and exploration. Graphic designers incorporate AI in workflows for efficiency. Illustrators leverage AI for backgrounds, references, and variations. Film and game studios use AI for pre-visualization, asset creation, and concept development.

The technology accelerates creative processes, enables exploration of more alternatives, and allows focus on high-level creative decisions while AI handles execution details.

Marketing and Advertising

Marketing departments embrace generative AI for personalized ad creative at scale, rapid A/B testing of visual concepts, product visualization and mockups, social media content generation, and campaign ideation and prototyping.

The speed and cost-effectiveness make previously impractical creative experimentation feasible.

Education and Research

Educational applications include visualizing historical events and scientific concepts, creating custom illustrations for teaching materials, generating practice problems and examples, and accessibility aids for visual learners.

Researchers use generative AI to visualize complex data and simulations, generate training data for other AI systems, prototype experimental designs, and explore creative possibilities in their fields.

E-commerce and Retail

Online retail benefits from product photography alternatives, virtual try-on and customization, lifestyle context images, seasonal variations without reshooting, and personalized marketing materials.

These applications reduce costs while increasing visual content variety.

Personal and Hobbyist Use

Individual creators use text-to-image AI for personal art projects, social media content, game development, writing illustration, gift creation, and creative exploration.

The democratization of creative tools empowers non-professionals to realize visual ideas previously requiring specialized skills.

Challenges and Limitations

Quality and Consistency Issues

Despite impressive progress, limitations remain. Common problems include anatomical errors in human and animal figures, inconsistent details across an image, text rendering difficulties within generated images, and challenges with specific object interactions.

Multiple generations and careful prompt engineering often prove necessary to achieve desired results.

Computational Requirements

High-quality generation demands significant computing resources. Training models requires infrastructure beyond most organizations’ reach. Even inference can strain consumer hardware, though optimizations continue to improve accessibility.

Cloud-based services mitigate this but introduce dependency on external platforms.

Bias and Representation

AI models reflect biases present in training data, leading to stereotypical representations, uneven quality across demographics, cultural biases in style and content, and underrepresentation of certain groups.

Addressing these biases requires careful dataset curation, bias mitigation techniques, diverse development teams, and ongoing monitoring and adjustment.

Prompt Sensitivity

Small prompt variations can produce dramatically different results, which makes it hard to achieve a specific vision. This sensitivity requires iteration, experimentation, and growing expertise in prompt engineering.

Copyright and Training Data

Legal questions surround the use of copyrighted material in training datasets. Artists and creators raise concerns about their work training systems that might compete with them. Courts and legislatures worldwide grapple with these issues, with outcomes remaining uncertain.

Ethical Considerations

Misinformation and Deepfakes

The ability to generate photorealistic images raises serious concerns about misinformation, evidence fabrication, defamation through fake imagery, and erosion of trust in visual media.

Detection tools, watermarking, and media literacy education attempt to address these risks, but the arms race between generation and detection continues.

Artistic Attribution and Value

Generative AI challenges traditional notions of authorship, creativity, and artistic value. Questions arise about who owns AI-generated content, whether AI creation constitutes art, how to value AI-assisted work, and the impact on professional artists’ livelihoods.

These philosophical and practical questions require ongoing dialogue among creators, technologists, legal experts, and society.

Labor Market Impacts

Automation of creative tasks may displace some workers while creating new roles. The transition requires workforce adaptation, education and retraining programs, and consideration of safety nets for affected workers.

Some view AI as a tool augmenting human creativity rather than replacing it, but impacts vary by role and context.

Access and Inequality

While generative AI democratizes some creative capabilities, it also risks creating new divides. Those with more resources can access more powerful tools, expertise in prompt engineering confers an advantage, and digital literacy requirements may exclude some populations.

Ensuring equitable access and benefits requires intentional policy and platform design choices.

Environmental Concerns

Training and running large AI models consumes substantial energy, raising environmental concerns. The carbon footprint of AI development prompts discussion about sustainable practices, model efficiency, and responsible deployment.

The Future of Generative AI

Technical Advancements

Ongoing research pursues numerous improvements: higher resolution and quality, better prompt understanding and following, improved consistency and controllability, reduced computational requirements, multi-modal integration, and longer-form content generation.

Next-generation models will likely address current limitations while introducing new capabilities.

Personalization and Customization

Future systems may offer personalized models trained on individual preferences, fine-tuned models for specific domains or styles, adaptive systems learning from user feedback, and collaborative AI-human creative processes.

This personalization could make AI tools more effective and aligned with individual needs.

Integration into Creative Workflows

Rather than remaining a standalone tool, generative AI is increasingly integrated into existing creative software, enabling seamless workflows, combining traditional and AI techniques, and letting users maintain creative control while leveraging AI capabilities.

This integration makes AI a natural part of creative processes rather than a separate step.

Real-time Generation

Improving efficiency may enable real-time or near-real-time generation, supporting interactive applications, live customization, and dynamic content adaptation.

Real-time capabilities would expand applications in gaming, virtual reality, and interactive media.

Regulatory Frameworks

Governments and institutions are developing frameworks that address copyright, liability, transparency requirements, and safety standards.

These regulations will shape how generative AI is developed and deployed commercially.

New Creative Paradigms

Generative AI may enable entirely new forms of creative expression, interactive and adaptive art, personalized experiences at scale, and collaboration between human creativity and machine capability.

The technology invites reimagining what creativity means and how humans and AI might create together.

Conclusion

Generative AI, particularly text-to-image synthesis, represents a fundamental shift in how we create visual content. The ability to conjure detailed images from simple text descriptions seemed like science fiction just years ago but has become accessible to millions.

This technology democratizes creative capabilities, accelerates creative processes, and opens new possibilities for expression and communication. It also introduces challenges around authenticity, attribution, bias, and impact on creative professions.

As generative AI continues evolving, society must navigate complex questions about creativity, authorship, labor, and the relationship between human and machine intelligence. The technology itself is neither inherently beneficial nor harmful—outcomes depend on how we develop, deploy, and govern it.

The most promising path forward involves viewing generative AI not as a replacement for human creativity but as a powerful tool augmenting human imagination and capability. By combining human insight, judgment, and creative vision with AI’s computational power and pattern recognition, we can achieve results neither could produce alone.

The revolution in AI-generated content has only begun. As models improve, applications multiply, and integration deepens, generative AI will increasingly shape how we create, communicate, and understand visual and textual information. Navigating this transformation thoughtfully—balancing innovation with responsibility, access with safety, and capability with ethics—represents one of the defining challenges and opportunities of our time.

The conversation about generative AI continues evolving as rapidly as the technology itself. Artists, technologists, policymakers, and users all have roles in shaping a future where these powerful tools benefit humanity while respecting creativity, truth, and human dignity. The images and content we generate today offer just a glimpse of what tomorrow’s AI-assisted creativity might achieve.
